As organizations race to adopt artificial intelligence, a practical question follows: how do you govern something that evolves this quickly? Many organizations find themselves caught between the urgency of AI adoption and the need to deploy responsibly. Fortunately, if you are already familiar with the NIST Cybersecurity Framework (CSF), you have a strong head start – the NIST Artificial Intelligence Risk Management Framework (AI RMF) builds naturally on that foundation.

The frameworks share overlapping concepts and complementary approaches. While the CSF has a broader scope covering all aspects of cybersecurity, the AI RMF concentrates specifically on the emerging risks presented by artificial intelligence technologies. At their core, both frameworks were developed to help organizations manage risk – and increasingly, those risk domains are converging.

How are the NIST CSF and the AI RMF Structured?

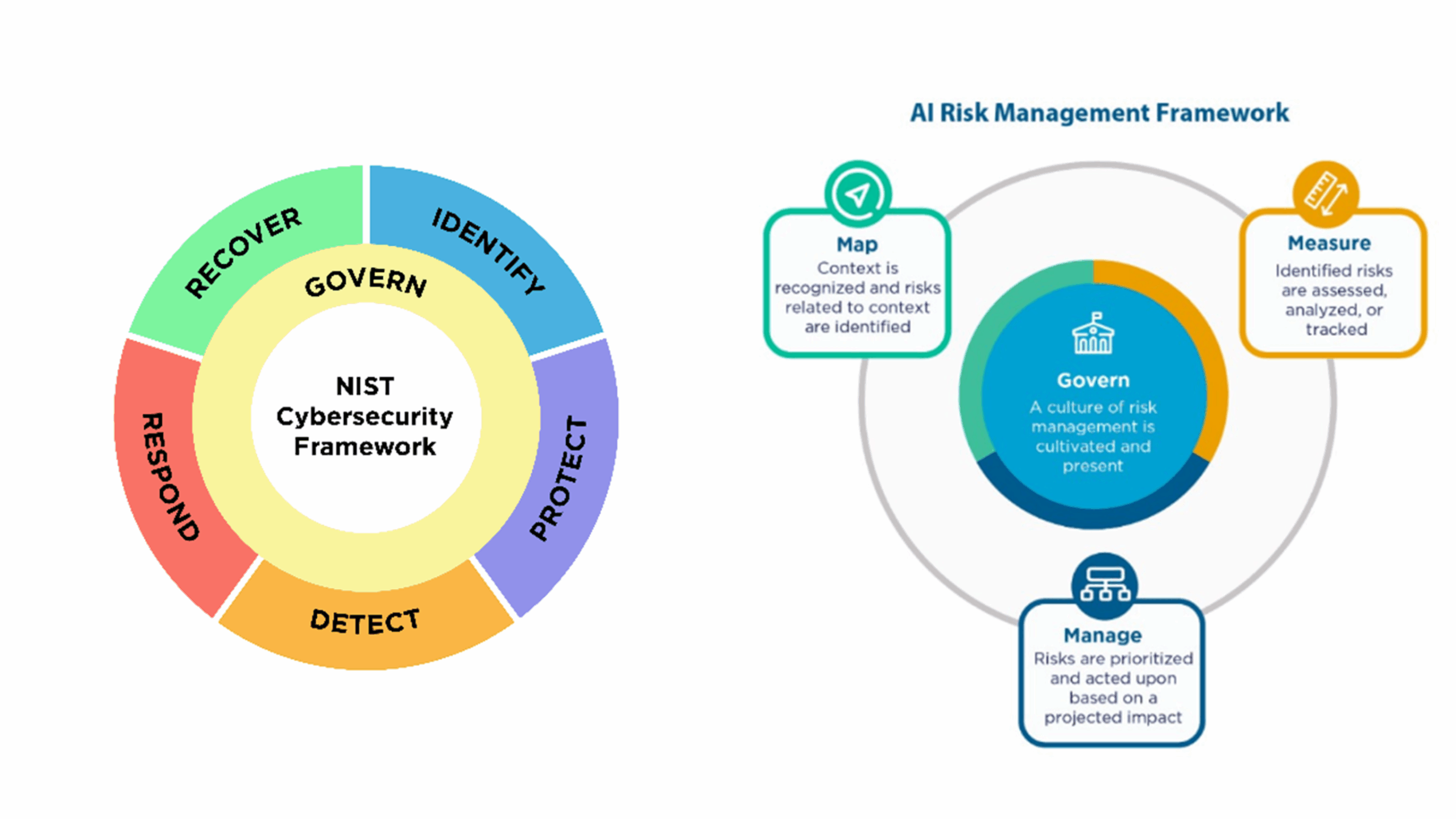

The CSF 2.0 – last updated in February 2024 – consists of six core functions: Govern, Identify, Protect, Detect, Respond, and Recover. The addition of “Govern” as a formal function was a significant evolution, elevating cybersecurity from a technical concern to a strategic, enterprise-wide priority.

Meanwhile, the AI RMF 1.0 – released in January 2023 – is built on four foundational functions: Govern, Map, Measure, and Manage. These functions are designed to help organizations identify, assess, and mitigate risks associated with AI systems throughout their lifecycle. Each function comprises various categories and subcategories, providing a structured approach to AI risk management and trustworthy AI development.

While the naming conventions of the frameworks may suggest different approaches, closer inspection reveals they are similar and complementary. Organizations already using the CSF will find familiar territory in the AI RMF.

What is the Key Similarity Between the NIST CSF and the AI RMF?

Both frameworks emphasize the importance of governance and incorporating business context. “Govern” is the common thread that runs through both. The CSF’s Govern function focuses on establishing governance structures for managing cybersecurity risks aligned with business objectives, while the AI RMF’s Govern and Map functions emphasize the alignment of AI risk management with organizational goals and values.

The goals of these functions are broadly the same across both frameworks, though the CSF leans more toward regulatory compliance, while the AI RMF incorporates stronger themes of transparency, ethics, and societal impact. This distinction matters: AI governance requires expertise that merges technical knowledge with ethical and societal awareness – accountability for model outputs can be difficult to define, especially with complex systems like generative AI and large language models.

How Do the Frameworks Help Identify AI and Cybersecurity Risks?

Both frameworks offer methods for posture assessment. The CSF uses the Identify function to develop an understanding of business context, assets, and risks across the enterprise. The AI RMF uses the Map and Measure functions to describe AI system context and identify potential risks – including bias, transparency issues, and accountability gaps.

The AI RMF goes further in defining specific risk categories unique to AI, which became even more detailed with the release of the Generative AI Profile (NIST AI 600-1) in July 2024. This companion document identifies twelve categories of risks specific to generative AI, including hallucinations, information integrity concerns, intellectual property issues, and chemical, biological, radiological and nuclear (CBRN) information risks.

How Do the Frameworks Approach Ongoing Risk Management?

Both frameworks recognize ongoing monitoring and management as essential to a resilient program. The CSF uses Protect, Detect, Respond, and Recover for continuous monitoring and improvement. This concept is consolidated into a single function in the AI RMF – Manage – which is designed to assess AI systems and address risks throughout their lifecycle.

This lifecycle approach is particularly important for AI. Unlike traditional software, AI systems can keep changing after deployment through continuous learning, real-world feedback, and interactions with dynamic environments, which makes AI a living system that requires continuous governance rather than a “set-it-and-forget-it” deployment. The AI RMF addresses this directly, helping organizations create repeatable, auditable, and lifecycle-grounded practices.
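For teams that track controls in code or spreadsheets, the function-level correspondences described in the preceding sections can be summarized as a simple lookup table. The following is a minimal Python sketch of that idea, not an official NIST crosswalk: the pairings reflect only the comparisons made in this article, and the names `CSF_TO_AI_RMF` and `csf_functions_for` are hypothetical, chosen for illustration.

```python
# Informal pairing of CSF 2.0 functions with AI RMF 1.0 functions,
# based solely on the comparisons drawn in this article.
# Assumption: this is an illustrative summary, not a NIST-published mapping.
CSF_TO_AI_RMF = {
    "Govern": ["Govern", "Map"],      # governance and business context
    "Identify": ["Map", "Measure"],   # understanding context and risks
    "Protect": ["Manage"],            # ongoing risk management
    "Detect": ["Manage"],
    "Respond": ["Manage"],
    "Recover": ["Manage"],
}


def csf_functions_for(ai_rmf_function: str) -> list[str]:
    """Return the CSF functions this article pairs with an AI RMF function."""
    return [
        csf
        for csf, rmf_functions in CSF_TO_AI_RMF.items()
        if ai_rmf_function in rmf_functions
    ]


if __name__ == "__main__":
    for name in ("Govern", "Map", "Measure", "Manage"):
        print(f"{name}: {', '.join(csf_functions_for(name))}")
```

A table like this may be a useful starting point for tagging an existing CSF-aligned risk register with the AI RMF functions it already touches, before moving to the category and subcategory level.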

What Are the Key Differences Between the NIST CSF and the AI RMF?

Despite the philosophical overlap, there are key differences worth noting:

- Scope: The CSF is deliberately broad, covering all aspects of cybersecurity across any organization type. The AI RMF is specifically tailored to AI systems and references unique technical challenges like algorithmic bias, explainability, and model vulnerabilities.

- Ethical Emphasis: The AI RMF places a stronger emphasis on ethical considerations and societal impacts, which are less prominent in the CSF. This includes considerations around fairness, accountability, and potential harms to individuals and communities.

- Stakeholder Engagement: The AI RMF’s Map function explicitly calls for identifying and engaging with a wide range of stakeholders (including affected communities), which is less emphasized in the CSF.

- Flexibility and Profiles: Both frameworks are designed to be flexible, but the AI RMF has invested heavily in use-case profiles that organizations can use to model specific applications, industry settings, or requirements. The Generative AI Profile is just one example; NIST continues to develop additional profiles for specific AI applications and sectors.

The Convergence of Cybersecurity and AI Risk

NIST recognized this convergence in December 2025 with the release of its preliminary draft Cybersecurity Framework Profile for Artificial Intelligence (Cyber AI Profile), coded NIST IR 8596. This new resource explicitly bridges the CSF 2.0 and AI RMF, acknowledging that AI introduces both new cybersecurity risks and new defensive capabilities. The Cyber AI Profile centres on three focus areas: securing AI systems from cyber attacks, using AI to enhance cyber defence operations, and building resilience against AI-enabled threats. The public comment period closed in January 2026, and NIST plans to release an initial public draft later this year. This development signals that organizations can no longer treat cybersecurity and AI governance as separate disciplines.

Alongside the Cyber AI Profile, NIST is developing SP 800-53 Control Overlays for Securing AI Systems (COSAiS), which will provide implementation-level guidance to help organizations operationalize AI-related controls. COSAiS covers five proposed use cases: generative AI, predictive AI, single-agent AI systems, multi-agent AI systems, and AI developer controls. It has already progressed from an initial concept paper to discussion drafts for stakeholder review. Together, these initiatives demonstrate NIST’s commitment to creating a cohesive ecosystem of frameworks, profiles, and controls.

The Evolving Regulatory Context

While both frameworks remain voluntary, their influence on regulatory expectations is growing. Federal agencies, sector regulators, and industry bodies increasingly reference the NIST AI RMF in their compliance and governance standards. The framework is widely used as a technical companion for EU AI Act compliance, and multinational companies are adopting the NIST frameworks as the operational layer beneath their regulatory compliance programs.

In August 2025, NIST released SP 800-53 Release 5.2.0, an update to its foundational catalogue of security and privacy controls, which introduced new controls and enhancements focused on the security and reliability of software updates and patches. While not AI-specific, these controls reflect NIST’s broader commitment to modernizing its security guidance alongside new AI-focused initiatives. The AI RMF is currently under revision, with NIST expected to release version 1.1 guidance addenda, expanded profiles, and more granular evaluation methodologies through 2026. Organizations that align with these frameworks today will be better positioned as regulatory requirements continue to evolve.

Conclusion: A Unified Approach to Risk

The NIST CSF and AI RMF share a common structure and philosophy. Organizations dealing with both cybersecurity and AI risks should consider implementing both frameworks, leveraging their similarities while addressing the unique risks associated with each domain.

The release of the Cyber AI Profile, the advancing COSAiS overlays, and the forthcoming AI RMF revision all signal that NIST views these frameworks as part of an integrated ecosystem – one that will continue to mature through 2026 as new guidance on agentic AI systems and sector-specific profiles takes shape. Organizations that ground their governance in these frameworks will have a significant head start, both in managing risk today and in adapting as the regulatory picture gets clearer.

Through our Govern 360 and AI Govern 360 offerings, ISA Cybersecurity delivers expert guidance that helps you work through the challenges and opportunities that AI brings to Canadian organizations like yours. With our cross-industry experience, we can help you demystify the security requirements and best practices for AI governance. Contact us today to learn more.