If you lead risk, compliance, or information security at a Canadian financial institution, OSFI Guideline E-23 – Model Risk Management (MRM) – is one of your most immediate AI governance obligations. Effective May 1, 2027, it applies to all federally regulated financial institutions (FRFIs) and sets out formal expectations for how AI and machine learning models should be governed across the enterprise. This article covers what E-23 actually requires, where institutions are finding the hardest implementation gaps, what OSFI will look for when it assesses compliance, and where to focus your program now.

Setting the Stage: A Unique Challenge

The challenge is that for most organizations, AI adoption is already ahead of AI governance. Unlike cloud, where risk frameworks could be designed before wide deployment, AI arrived differently – through the front door, the side door, and every window in between. ChatGPT reached 100 million users in two months, a pace no enterprise technology had seen before, and the dynamic inside financial institutions followed the same pattern. By the time risk and security teams were formalizing governance conversations, employees were already using AI tools, embedding them in workflows, and in some cases feeding sensitive data into unsanctioned models. Shadow AI is not an edge case. For most organizations, it is the starting condition – and it is the condition E-23 compliance must be built on top of.

Nitin Bedi, Vice President, Services, ISA Cybersecurity: “My father-in-law is 85 years old. He’s never once asked me about cloud security. But last month, he wanted a ChatGPT demo – and to get it installed on his iPhone. That tells you everything about how differently this wave of technology has landed.”

What Does E-23 Actually Require?

OSFI’s Guideline E-23 defines a “model” broadly: any structured analytical method, including AI/ML methods, that takes input data, applies processing logic, and generates results meaningful to business or control functions. That definition captures far more than most teams initially appreciate – from traditional actuarial and credit models to AI capabilities quietly embedded in productivity software. The MRM framework OSFI expects institutions to establish covers nine areas: model inventory, risk classification, change controls, approval processes, monitoring, model lifecycle management, reporting, governance structure, and third-party model risk. Any risk or compliance professional will recognize the discipline – it is the same structure applied to any material operational asset, now formalized specifically for models.

A point that CROs and CISOs must note: E-23 explicitly expects material model risk to be reported to the board of directors. That has implications for how risk functions structure their reporting cadence and what fluency they need from board risk committees on AI and model risk topics. It also means model governance cannot live solely in a technical or operational function – it requires visible executive accountability.

E-23 also links directly to Guideline B-10, OSFI’s third-party risk management framework. A vendor’s SOC 2 report alone is not sufficient. FRFIs must define precisely where vendor accountability and responsibility end and their own begin – and many AI vendors do not yet have validation and reporting capabilities that meet E-23 standards. Institutions that assume vendor compliance will find themselves exposed.

For institutions operating in Quebec, the AMF published a parallel Model Risk Management Guideline in June 2025; where the two differ, the stricter standard applies.

The Inventory Problem

Model inventory is one of the most consistently cited challenges in E-23 implementation – and it does not get easier over time. The initial pass requires visibility into areas that have historically operated independently: business units, finance functions, operations teams, and an increasingly complex vendor ecosystem. Most institutions can account for the models they know about – core financial models, formally approved AI deployments, and AI tools that went through procurement. The gap is what lies beneath that: the AI capabilities they did not approve, did not notice, and may have limited means to detect.

An under-appreciated challenge is that vendors are enabling AI features inside existing platforms through routine updates, bypassing the procurement and security reviews that would normally flag a net-new tool. We call this “+AI”. While organizations should be watching for employees who seek out novel AI applications, the more likely risk is familiar business software quietly toggling on AI/ML functionality between renewal cycles. The practical response is implementing vendor governance checkpoints specifically designed to catch AI capabilities embedded in existing contracts – not just net-new procurement.
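One way to picture such a checkpoint is a comparison of what a vendor declared at the last review against what it declares now. The sketch below is illustrative only: it assumes the institution keeps a per-platform snapshot mapping each feature name to whether the vendor attests that it uses AI/ML. The function name, the snapshot shape, and the sample features are all hypothetical, and nothing here is prescribed by E-23 or B-10.

```python
def new_ai_features(previous: dict[str, bool], current: dict[str, bool]) -> list[str]:
    """Return features that use AI/ML now but did not at the last review.

    Each snapshot maps a feature name to a vendor-attested flag
    (True = the feature uses AI/ML). Both the shape and the flag are
    assumptions made for this illustration.
    """
    return sorted(
        name
        for name, uses_ai in current.items()
        # Flag brand-new AI features and existing features that gained AI.
        if uses_ai and not previous.get(name, False)
    )


# Hypothetical snapshots for one platform, taken at two review points.
last_review = {"search": False, "reporting": False}
this_review = {"search": True, "reporting": False, "meeting_summaries": True}

flagged = new_ai_features(last_review, this_review)
```

Anything in `flagged` would then be routed to the same review a net-new AI tool receives, which is the point of the checkpoint: the trigger is the change in capability, not a new contract.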

When mapping the AI landscape for inventory purposes, consider three primary categories – and a fourth dimension that applies to all of them:

- Existing platforms with AI layered on – software already in use where the vendor has enabled AI/ML features, often through a routine update. These are the hardest to catch because no new procurement occurs.

- Purpose-built AI solutions – custom-built agents, models, or applications developed internally or procured specifically to deliver an AI capability. These typically go through governance processes, but not always.

- Third-party SaaS AI applications – standalone tools or services adopted for a specific function or business need.

The fourth dimension is sanctioned versus unsanctioned use within each of those categories. Shadow AI – employees adopting AI-powered tools independently without IT or security review – is the pattern most likely to create material exposure.

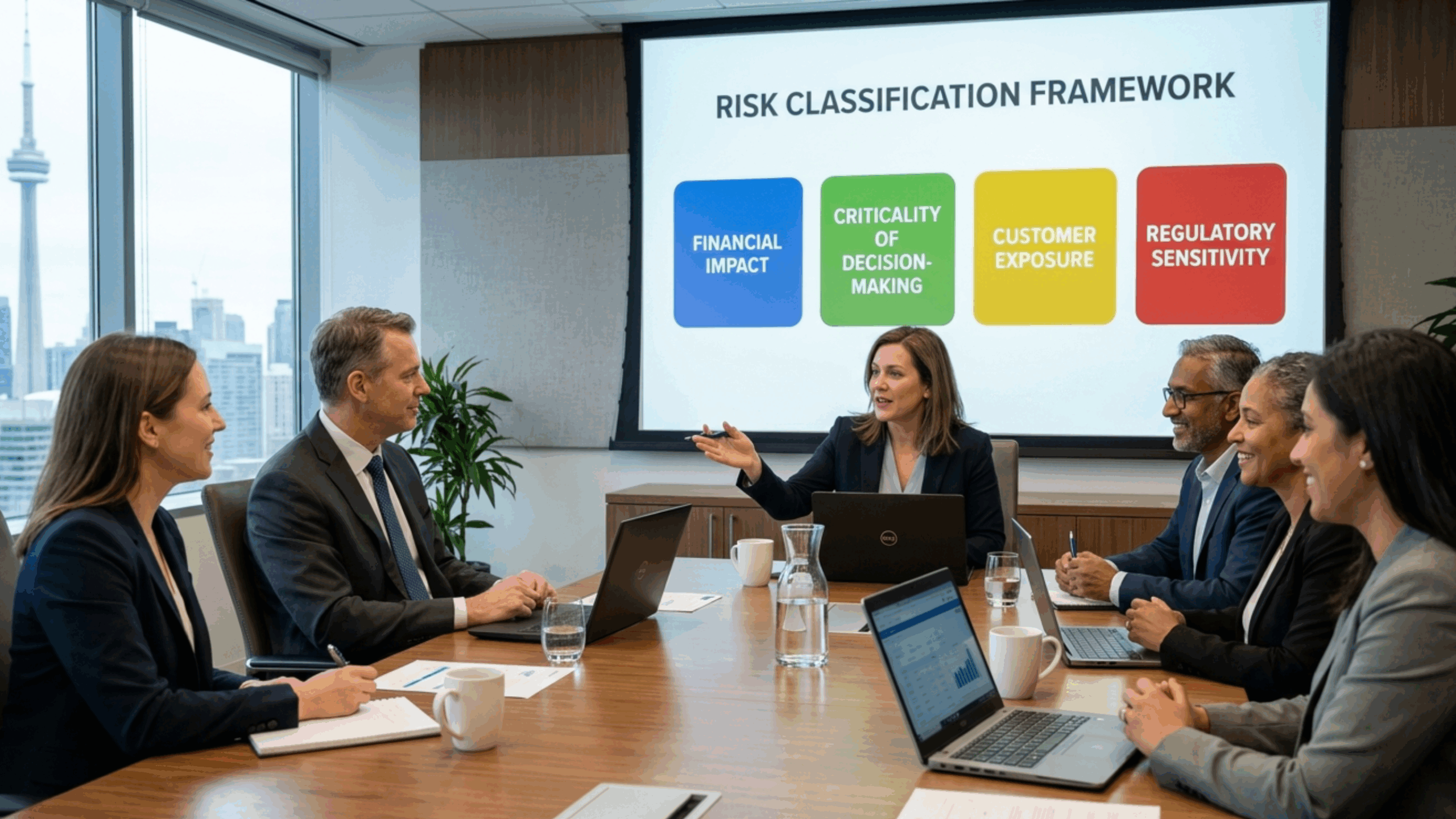
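The three categories and the sanctioned/unsanctioned dimension translate naturally into a simple inventory record. The following is a minimal sketch, assuming one record per model or AI capability; the field names (`owner`, `vendor`) and helper functions are hypothetical, and a real inventory would carry many more attributes.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Category(Enum):
    PLATFORM_PLUS_AI = "existing platform with AI layered on"
    PURPOSE_BUILT = "purpose-built AI solution"
    THIRD_PARTY_SAAS = "third-party SaaS AI application"


@dataclass(frozen=True)
class InventoryEntry:
    name: str
    owner: str                    # accountable business unit (hypothetical field)
    category: Category
    sanctioned: bool              # the fourth dimension: reviewed and approved, or not
    vendor: Optional[str] = None  # None for internally built models


def unsanctioned(entries):
    """Entries in use without IT or security review (shadow AI)."""
    return [e for e in entries if not e.sanctioned]


def count_by_category(entries):
    """Number of inventory entries in each of the three categories."""
    counts = {category: 0 for category in Category}
    for entry in entries:
        counts[entry.category] += 1
    return counts


# Hypothetical sample entries.
inventory = [
    InventoryEntry("CRM lead scoring", "Sales", Category.PLATFORM_PLUS_AI, True, "ExampleCRM"),
    InventoryEntry("Credit adjudication model", "Retail Credit", Category.PURPOSE_BUILT, True),
    InventoryEntry("Browser-based chatbot", "Marketing", Category.THIRD_PARTY_SAAS, False, "ExampleChat"),
]
```

Keeping `sanctioned` as a field on every record, rather than as a separate list, means shadow AI discovered later is added to the same inventory and picks up the same classification and review workflow.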

Risk Classification: Not All Models Are Equal

A defensible MRM framework requires a risk-tiered view of the model inventory rather than applying the same level of scrutiny to everything. This tiering is what makes governance operationally sustainable – without it, full lifecycle review requirements become unmanageable at scale. Four dimensions are particularly useful when risk-rating models under E-23:

- Financial impact: Does the model influence financial inputs, outputs, or capital calculations?

- Criticality of decision-making: To what extent do business outcomes depend on the model’s output?

- Customer exposure: Does the model access or return customer data, or influence what customers receive? And crucially, does it create a “use” of customer data not covered by existing privacy consents?

- Regulatory sensitivity: Does the model’s use trigger privacy, compliance, or other regulatory considerations?

Applying these criteria consistently across business units – using standardized scoring rather than line-of-business judgment – is what makes the risk ratings defensible both internally and to OSFI.
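As an illustration of what standardized scoring could look like, the sketch below rates each of the four dimensions on a 1 to 3 scale and derives a tier from fixed rules. The scale, the thresholds, and the tier names are assumptions made for this example; E-23 does not prescribe a scoring formula, and each institution would calibrate its own.

```python
from dataclasses import dataclass

DIMENSIONS = (
    "financial_impact",
    "decision_criticality",
    "customer_exposure",
    "regulatory_sensitivity",
)


@dataclass(frozen=True)
class ModelScores:
    """Scores on a hypothetical 1 (low) to 3 (high) scale per dimension."""
    financial_impact: int
    decision_criticality: int
    customer_exposure: int
    regulatory_sensitivity: int

    def __post_init__(self):
        for dimension in DIMENSIONS:
            value = getattr(self, dimension)
            if value not in (1, 2, 3):
                raise ValueError(f"{dimension} must be 1, 2, or 3, got {value!r}")


def risk_tier(scores: ModelScores) -> str:
    """Apply the same illustrative rules to every model, in every business unit."""
    values = [getattr(scores, dimension) for dimension in DIMENSIONS]
    if max(values) == 3:    # any single high-rated dimension escalates the model
        return "high"
    if sum(values) >= 6:    # several moderate ratings together
        return "medium"
    return "low"
```

For example, `risk_tier(ModelScores(3, 1, 1, 1))` returns `"high"` even though three dimensions are rated low. Whether a single dimension should escalate the whole model is exactly the kind of design choice an institution should be able to explain when asked.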

Data Governance and Identity: The Underlying Foundation

Any model produces outputs only as sound as the data it accesses. Without structured data classification, access controls, and identity and access management, model governance is incomplete. This is where information security and model risk functions converge, and where many FRFIs remain underinvested. Security budgets are still weighted heavily toward perimeter and endpoint controls, with the data layer receiving a fraction of the spend – despite being the layer that AI/ML models draw on directly. Unstructured data warrants particular attention: generative AI applications increasingly operate over documents, emails, and internal repositories that are least likely to be properly classified and protected.

E-23 does not stand alone here. OSFI’s Guideline E-21 – on operational risk management and resilience, with data risk management as a core component – has been binding since August 2024, with full compliance required by September 2026. Institutions building strong data governance foundations are simultaneously making progress on multiple OSFI fronts.

AI-Specific Incident Response

One area consistently underdeveloped in early-stage AI governance programs is incident response. AI/ML failures are not conventional IT failures – they can involve gradual model drift, subtle hallucinations, biased outputs, or adversarial manipulation through prompt injection. Each requires detection capabilities and response playbooks specific to AI behavior rather than general IT incident protocols. OSFI’s expectations extend to monitoring, remediation, and documentation of model issues across the full model lifecycle. It’s important not to assume that your traditional IR plans will suit the unique AI landscape. Institutions that have built governance frameworks but not AI-specific detect-and-respond capabilities have a gap OSFI will find.
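Gradual model drift is the most readily quantified of these failure modes. One common technique, shown here as an example rather than anything E-23 mandates, is the population stability index (PSI), which compares the distribution of a model input or score at validation time against what is observed in production. The sketch assumes both distributions are already expressed as proportions over the same bins; the 0.1 and 0.25 cut-offs are a widely used rule of thumb, not a regulatory threshold, and the status labels are hypothetical.

```python
import math


def population_stability_index(expected, actual, eps=1e-6):
    """PSI between two binned distributions given as lists of proportions.

    `expected` is the baseline (for example, at validation) and `actual`
    is the current production distribution over the same bins.
    """
    if not expected or len(expected) != len(actual):
        raise ValueError("expected and actual must be non-empty and the same length")
    psi = 0.0
    for e, a in zip(expected, actual):
        e = max(e, eps)  # guard against log(0) and division by zero in empty bins
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def drift_status(psi, watch=0.1, investigate=0.25):
    """Map a PSI value to an action using rule-of-thumb thresholds."""
    if psi >= investigate:
        return "investigate"
    if psi >= watch:
        return "watch"
    return "stable"
```

A check like this covers only distribution shift. Hallucinations, biased outputs, and prompt injection need their own detection methods and playbooks, which is why a single monitoring metric is not a substitute for an AI-specific incident response plan.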

How Will OSFI Assess Compliance?

E-23 is principles-based guidance, not a prescriptive rulebook – and that distinction has real implications for how institutions should approach compliance. OSFI will not arrive with a checklist. What they will look for is evidence that an institution has genuinely engaged with the intent behind each expectation, not just whether a policy document exists. The question OSFI is effectively asking is not “do you have this?” but “why did you approach it this way, what risk were you managing, and how do you know it’s working?” Institutions that treat E-23 as a tick-box exercise tend to find, when OSFI pushes on the details, that they have documentation without substance. The regulator is deliberately not prescriptive – the intent is for institutions to apply genuine judgment to their own risk profile, not to follow a template.

Starting from an established framework rather than building a bespoke one helps here, and it is what OSFI itself expects. The NIST AI Risk Management Framework – Govern, Map, Measure, Manage – and ISO 42001 both provide defensible foundations that OSFI’s own guidance draws upon. Building your MRM framework on a proven external standard and mapping it to E-23 produces something more defensible, and more useful, than a framework written solely to satisfy a questionnaire.

Nitin Bedi, Vice President, Services, ISA Cybersecurity: “You can’t treat E-23 like a checkbox exercise – it’s principles-based, and it comes down to judgment in how model risk is actually managed. Take AI model validation. It’s not a one-time sign-off. It’s whether the model keeps performing as expected over time, in the way it’s actually used.”

Where to Focus First

The organizations making the most tangible E-23 progress have concentrated effort on these areas before anything else:

- Data governance: Classification, access management, and data protection come first, with unstructured data deserving particular attention. Every other element of model governance depends on knowing what data the models are actually touching and who has access to it.

- Model inventory with a durable maintenance process: Getting a reasonable baseline inventory is the starting point, not the finish line. The harder work is building a mechanism that keeps pace with new AI deployments, silent vendor feature additions, and changing use cases across the enterprise.

- Vendor governance: FRFIs need to go materially beyond standard third-party risk assessments and explicitly address how suppliers build and validate their AI/ML models, how they handle data, and how they intend to support FRFI compliance obligations over time.

- Resourcing: E-23 explicitly expects institutions to have personnel with the requisite skills and experience to manage model risk, particularly for novel AI/ML techniques. A gap here doesn’t just affect one workstream – it can weaken the entire program. Whether addressed through internal hiring, upskilling, or external partner support, appropriate staffing needs to be part of the implementation plan.

- Employee awareness: The shadow IT pattern – departmental tools built outside IT, undocumented, ungoverned, and outliving their creators – is repeating itself with AI at greater scale and with far higher data-access stakes. Educating staff on what they can and cannot do with AI tools, and why those boundaries exist, is not a soft program. It is a risk control.

The Bottom Line

Approached correctly, E-23 provides an opportunity to establish data governance that supports better decision-making, to build model oversight that reduces operational risk, and to demonstrate to OSFI a program that reflects genuine institutional consideration rather than an annual box-ticking exercise. The FRFIs that will fare best are not those with the most thorough documentation. They are the ones that have built the intent of the guideline into how they manage AI and model risk every day.

Working Through This?

ISA Cybersecurity works with Canadian financial institutions at every stage of AI risk and governance programs – from initial model inventory and risk classification through to MRM framework development, vendor governance assessments, and ongoing assurance. If OSFI E-23 compliance is on your agenda and you would like to talk through where your organization stands, get in touch – we’re here to help.